Many politicians and technologists in the United States believe the country would be better off if the government provided greater financial support for computer chips, which act as the brains or memory in everything from fighter planes to refrigerators. Proposals for tens of billions of dollars in government support are making their way through Congress, although shakily.

One of the main stated objectives is to increase the production of computer chips in the United States. This is unusual in two ways: first, the United States is philosophically opposed to government aid for favored industries, and second, we typically think of American invention as separate from where things are physically manufactured.

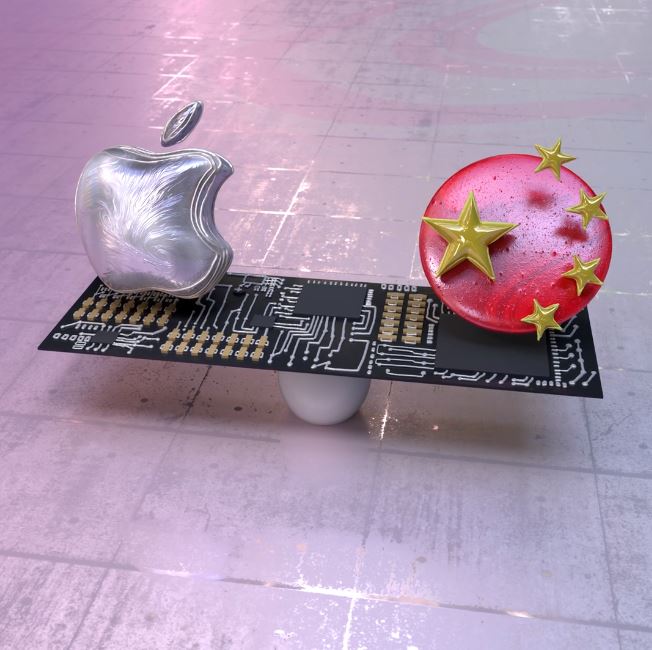

Although the vast majority of cellphones and computers are made outside the United States, most of the brainpower and value associated with that technology is concentrated here. Apple and Microsoft are based in the United States, while China has many of the factories. That’s a fantastic trade for the United States.

Today, I want to go back to the fundamentals and ask: What are we trying to accomplish by increasing the number of chips produced on American soil? And, more importantly, are the government and the technology sector taking the most effective route to those objectives?

There are plausible arguments that chips are not the same as iPhones, and that it would be beneficial for Americans if more chips were made in the United States, even if it took several years to get there. (It certainly would.) My conversations with technology and policy experts, however, have shown that those who advocate government assistance for the computer chip sector have a wide range of objectives for it.

Some analysts believe that manufacturing more computer chips in the United States would help protect the country against China’s military and technological ambitions. Others want it to help clear industrial bottlenecks in the automobile industry or to keep the United States at the forefront of scientific research. The military prefers that chips be manufactured in the United States to protect fighter planes and laser weapons.

Atkinson told me that he supported legislation now making its way through Congress that would provide government assistance for technological research and development, as well as public subsidies for U.S. chip manufacturing. However, he cautioned that U.S. policy may end up treating all domestic technology production as equally vital. “It would be fantastic if we could produce more solar panels, but I don’t believe it is a smart move,” he said.

It’s difficult to determine how vital it is to increase the number of chips produced in American facilities. I’m well aware that pouring public money into chip facilities that will take years to start producing anything will not alleviate the pandemic-related chip shortages that have made it difficult to purchase Ford F-150 pickup trucks and video game consoles.

No matter what the United States government does, Asian firms will continue to dominate the chip manufacturing industry. Even if output in the United States climbs to, say, 20% of total global production, future pandemics or a crisis in Taiwan might still leave the U.S. economy susceptible to chip shortages.

What is happening with computer chips is part of a larger debate about both U.S. policy and our national mindset: What should the United States do about a future in which technology is becoming less American? We need policymakers to ask themselves where this matters, where it doesn’t, and where the government should concentrate its efforts to keep the nation running smoothly.